On the Comparability and Optimal Aggressiveness of the Adversarial Scenario-Based Safety Testing of Robots

Type I and Type II differences.

Abstract

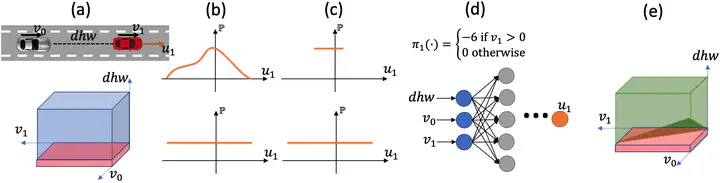

This article studies the class of scenario-based safety testing algorithms in the black-box safety testing configuration. For algorithms that share the same state–action set coverage but use different sampling distributions, it is commonly believed that prioritizing the exploration of high-risk states and actions leads to better sampling efficiency. We dispute this intuition with an impossibility theorem, which proves that all safety testing algorithms differing only in this way perform equally well, with the same expected sampling efficiency. Moreover, for testing algorithms that cover different sets of states and actions, the sampling efficiency criterion is no longer applicable, because different algorithms do not necessarily converge to the same termination condition. We then propose a definition of testing aggressiveness based on the almost safe set concept, along with an unbiased and efficient algorithm that compares the aggressiveness of testing algorithms. Empirical observations from the safety testing of bipedal locomotion controllers and vehicle decision-making modules are also presented to support the proposed theoretical implications and methodologies.